Unlocking the MLS Database with APIs

Unlock the MLS database with APIs. Learn how to access property data, gain real estate insights, and integrate MLS data for your business needs.


A real estate transaction database has become essential infrastructure for data-driven property decisions, yet most teams struggle to find APIs that deliver comprehensive records without unpredictable costs or technical friction.

If your team needs reliable access to housing transaction data, prioritize providers that offer transparent pricing, unrestricted throughput, and nationwide coverage under a single integration.

Accessing accurate property records has shifted from competitive advantage to operational necessity. Investment firms, lenders, insurers, and PropTech platforms all depend on structured property data to power everything from underwriting models to fraud detection systems. A well-designed real estate transaction database can reduce research time from days to seconds while ensuring consistency across analytical workflows.

The challenge is that these databases vary dramatically in coverage, pricing structure, and ease of integration. Some providers charge per API request regardless of whether that request returns useful data. Others restrict access by metro area or property type, forcing teams to manage multiple contracts as they scale. This guide walks through what a real estate transaction database actually contains, how to evaluate providers, and what to look for when integrating property data into your workflows.

A real estate transaction database aggregates records of property sales, ownership transfers, and related financial events into a queryable system. These databases pull from public records, county assessors, deed filings, and other authoritative sources to create a consolidated view of property history.

The core value lies in historical depth. While listing feeds show what properties are currently on the market, transaction databases reveal what has already happened: previous sale prices, ownership chains, tax assessment changes, and mortgage activity. This historical context is essential for valuation models, risk assessment, and market trend analysis.

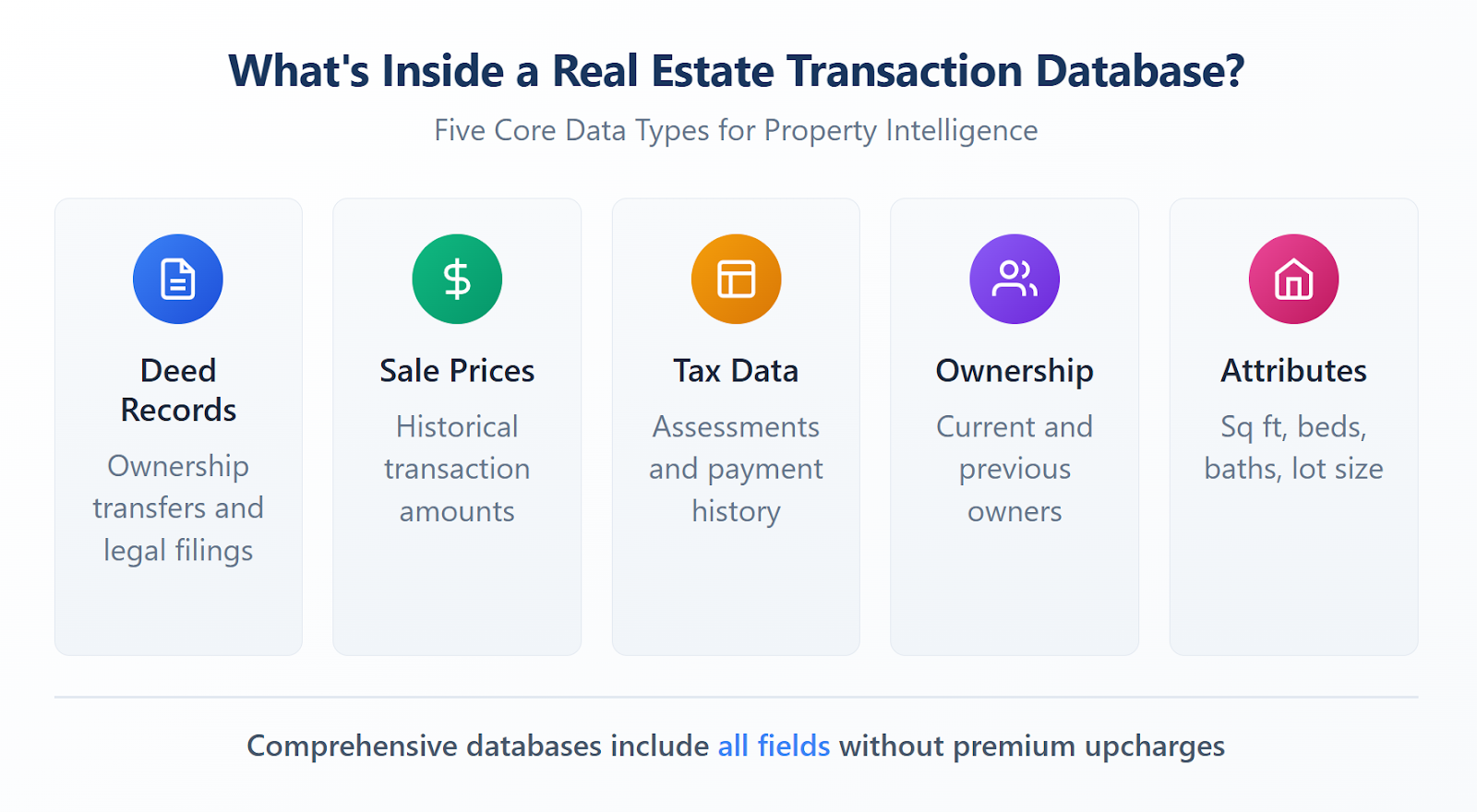

Transaction databases typically include deed records documenting ownership transfers, sale prices and dates, tax assessment histories, mortgage and lien information, and property characteristics tied to each transaction. The combination of these fields allows analysts to track how property values have changed over time, identify patterns in ownership turnover, and flag anomalies that might indicate fraud or data quality issues.

Listing data captures properties actively marketed for sale or rent. It reflects asking prices, agent information, and marketing descriptions. Transaction data, by contrast, documents completed events. It shows what buyers actually paid, when ownership changed hands, and how assessors valued the property at various points in time.

For investment analysis, transaction data provides the ground truth that listing data cannot. A property might be listed at $500,000, but transaction records reveal that comparable homes in the same neighborhood have consistently sold for $420,000 over the past three years. This distinction matters for anyone building pricing models, conducting due diligence, or validating automated valuations.
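
The gap between an asking price and closed sales can be expressed as a simple premium over the median comparable sale. The sketch below is illustrative only: the three comparable prices are made-up values chosen to match the $420,000 figure above, and `asking_premium` is a hypothetical helper, not part of any provider's API.

```python
from statistics import median
from typing import Sequence


def asking_premium(asking_price: float, comparable_sales: Sequence[float]) -> float:
    """Return how far the asking price sits above the median comparable sale, as a fraction."""
    baseline = median(comparable_sales)
    return (asking_price - baseline) / baseline


# Illustrative closed-sale prices for comparable homes (made-up values).
comps = [415_000, 420_000, 425_000]
premium = asking_premium(500_000, comps)
print(f"Asking price is {premium:.1%} above the median comparable sale")
```

With these inputs the asking price lands about 19% above what comparable buyers actually paid, which is the kind of signal a pricing model or due diligence review would want to surface.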

Manual data collection does not scale. Pulling transaction records county by county, reformatting spreadsheets, and reconciling inconsistent schemas consumes hours that technical teams cannot afford. APIs eliminate this friction by delivering standardized records on demand.

Speed is the most obvious benefit. A well-designed property data API returns results in seconds rather than days. Teams can query millions of records, filter by geography or property type, and receive structured responses ready for analysis. This acceleration compresses project timelines and allows for iterative testing that manual processes cannot support. According to recent industry research, two-thirds of real estate professionals cite time savings as their primary motivation for adopting new technology, underscoring how critical efficient data access has become.

Integration matters just as much. When transaction data flows directly into existing systems through an API, it eliminates the copy-paste errors and version control problems that plague spreadsheet-based workflows. Analysts can build dashboards that refresh automatically, data scientists can train models on current information, and operations teams can trigger alerts based on real-time changes.

The applications for a real estate database API extend across multiple industries. Investment firms use transaction histories to identify undervalued properties and validate acquisition targets. Lenders pull ownership records and tax assessments during underwriting to verify borrower information and assess collateral risk.

Insurance companies cross-reference transaction data to detect fraudulent claims and ensure accurate property valuations for coverage calculations. Fraud prevention teams at financial institutions flag shipping addresses that match vacant or recently sold properties, reducing losses from stolen goods delivered to empty homes.

PropTech startups build consumer-facing tools on top of transaction data, powering everything from home value estimators to neighborhood trend reports. Each of these use cases demands reliable, comprehensive data delivered through an interface that integrates cleanly with production systems.

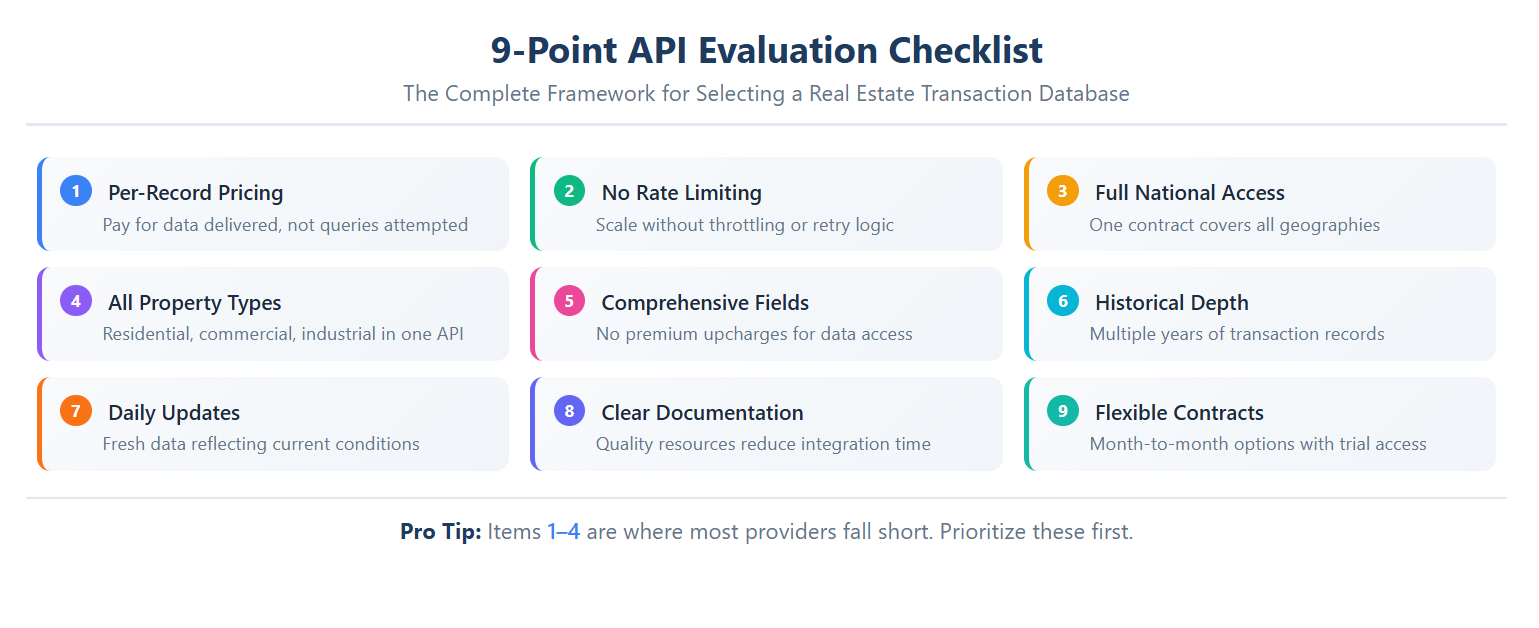

Choosing a real estate database API requires more than comparing feature lists. The differences that matter most often hide in pricing structures, access restrictions, and operational constraints that only surface during implementation or scale. The sections below break down the three areas where providers diverge most significantly.

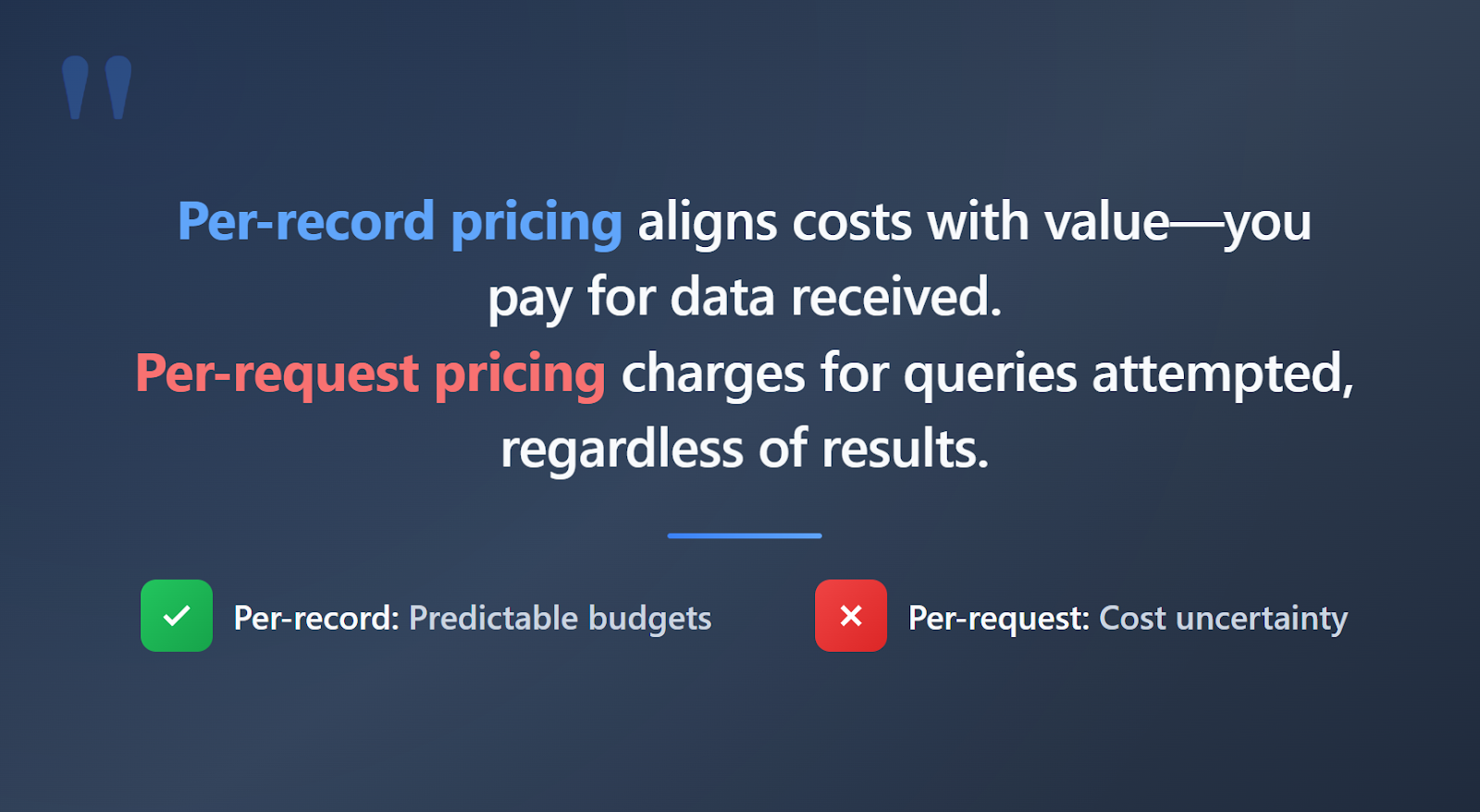

Pricing structures vary significantly, and the wrong model can turn a promising integration into a budget headache. Per-request pricing charges for every API call regardless of outcome. If a query returns no results or incomplete data, you still pay. This model creates uncertainty, especially for exploratory analysis or applications with variable query volumes.

Per-record pricing aligns costs with value. You pay for the data you actually receive, not for the queries you attempt. This approach makes budgeting predictable and eliminates the penalty for thorough searches. When evaluating providers, ask specifically how they handle queries that return no results and whether pricing scales linearly with data volume.
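
To see how the two models diverge, it can help to run the same workload through both. The following is a minimal sketch under stated assumptions: the prices (10 cents per request, 1 cent per record) and the workload are invented for illustration and do not reflect any provider's actual rates.

```python
def per_request_cost_cents(records_per_query, price_per_request_cents):
    """Every query is billed, including those that return nothing."""
    return len(records_per_query) * price_per_request_cents


def per_record_cost_cents(records_per_query, price_per_record_cents):
    """Only delivered records are billed, so empty queries cost nothing."""
    return sum(records_per_query) * price_per_record_cents


def wasted_request_spend_cents(records_per_query, price_per_request_cents):
    """Spend on queries that returned zero records under per-request billing."""
    empty_queries = sum(1 for count in records_per_query if count == 0)
    return empty_queries * price_per_request_cents


# Hypothetical workload: five queries, two of which return no records.
records_per_query = [12, 0, 30, 0, 8]

print(per_request_cost_cents(records_per_query, 10))      # total under per-request billing
print(wasted_request_spend_cents(records_per_query, 10))  # portion spent on empty results
print(per_record_cost_cents(records_per_query, 1))        # total under per-record billing
```

In this made-up workload both models bill 50 cents in total, but 20 of the 50 cents under per-request billing paid for queries that returned nothing. The share of empty queries in your own traffic is the number to estimate before comparing quotes.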

Hidden costs also deserve scrutiny. Some providers charge premium rates for specific fields like tax histories or ownership records. Others require separate contracts for different data types. Look for providers that include comprehensive field access without per-attribute upcharges.

Rate limiting restricts how many requests you can send within a given time window. Providers impose these limits to manage server load, but they create real problems for developers. When your application hits a rate limit, you must build retry logic, implement request queuing, and handle failures gracefully. This adds engineering complexity that has nothing to do with your actual product.

For high-volume applications, rate limiting becomes a scaling bottleneck. Teams end up throttling their own systems to stay within provider constraints rather than optimizing for user experience or analytical throughput. Providers that operate without artificial request caps remove this friction entirely, allowing your infrastructure to scale without external constraints.
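
To make that engineering overhead concrete, here is a rough sketch of the retry logic a rate-limited client typically needs. `RateLimitError` is a hypothetical exception standing in for whatever a given provider returns when a limit is exceeded (often an HTTP 429 response), and the delay values are arbitrary.

```python
import time


class RateLimitError(Exception):
    """Hypothetical error raised when the provider rejects a request for exceeding its limit."""


def call_with_backoff(request_fn, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call request_fn, retrying on RateLimitError with exponentially growing waits."""
    for attempt in range(max_attempts):
        try:
            return request_fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** attempt)  # waits of 1s, 2s, 4s, ...


# Demo with a stand-in request that is rejected twice before succeeding.
attempts = {"count": 0}


def flaky_request():
    attempts["count"] += 1
    if attempts["count"] <= 2:
        raise RateLimitError("too many requests")
    return {"status": "ok"}


waits = []  # record the waits instead of actually sleeping
result = call_with_backoff(flaky_request, sleep=waits.append)
print(result, waits)
```

The `sleep` parameter is injectable so the behavior can be tested without real delays. Production code would usually add jitter and a request queue on top of this, which is exactly the extra surface area the paragraph above describes.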

Some providers package data by metropolitan area or region, requiring separate contracts for each geography. This creates friction as your business expands. A company that starts with coverage in three metros might need data from twelve within a year. Regional packaging turns scaling into a procurement exercise rather than a technical one.

Property type restrictions cause similar problems. A provider focused exclusively on residential single-family homes leaves gaps for teams that need commercial, industrial, or multi-family data. Maintaining separate integrations for different property types increases maintenance burden and introduces inconsistencies in data schemas.

Full national access with all property types under a single integration eliminates these constraints. Your queries can span any geography and any property category without renegotiating contracts or managing multiple vendor relationships.

The sections above cover pricing, throughput, and coverage in depth. This checklist pulls those criteria together with additional practical factors to give you a complete evaluation framework:

Integration complexity varies based on your existing infrastructure and use case requirements. However, the general process follows a consistent pattern that most technical teams can execute without specialized expertise.

Authentication typically involves API keys or tokens that identify your account and authorize requests. Most providers issue these credentials through a web portal after account creation. Store these tokens securely and implement rotation procedures if your security requirements demand it.
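
One common way to keep credentials out of source control is to read them from an environment variable at runtime. The sketch below assumes a bearer-token scheme and a variable named `PROPERTY_API_TOKEN`; both are assumptions for illustration, so check your provider's documentation for the header format it actually expects.

```python
import os


def build_auth_headers(env_var="PROPERTY_API_TOKEN"):
    """Read the token from the environment so it never lives in source control."""
    token = os.environ.get(env_var)
    if not token:
        raise RuntimeError(f"Set the {env_var} environment variable before making requests")
    return {
        "Authorization": f"Bearer {token}",  # bearer scheme is an assumption
        "Content-Type": "application/json",
    }


# For demonstration only: real deployments set this outside the code.
os.environ.setdefault("PROPERTY_API_TOKEN", "demo-token")
headers = build_auth_headers()
print(sorted(headers))
```

Because the token is looked up on each call, rotating a credential only requires updating the environment, not redeploying code.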

Query construction depends on the specific API design, but most property data APIs accept geographic filters, property type specifications, and date ranges. Start with narrow queries that return small result sets to verify that responses match your expectations. Expand scope gradually as you confirm data quality and schema compatibility.

A typical workflow begins with defining your search criteria. Suppose you need transaction records for single-family homes in Texas from the past two years. You would construct a query specifying the state, property type, and date range, then submit it to the API endpoint.
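
The Texas example above might be assembled along these lines. This is a sketch, not a real request format: the field names (`state`, `property_type`, `sale_date_from`, `sale_date_to`) and the `single_family` value are hypothetical, and each provider defines its own query syntax.

```python
from datetime import date


def years_ago(today, years):
    """Return the same calendar day `years` earlier, falling back to Feb 28 for leap days."""
    try:
        return today.replace(year=today.year - years)
    except ValueError:
        return today.replace(year=today.year - years, day=28)


def build_transaction_query(state, property_type, years_back, today=None):
    """Assemble search criteria as a plain dict; all field names are hypothetical."""
    today = today or date.today()
    return {
        "state": state,
        "property_type": property_type,
        "sale_date_from": years_ago(today, years_back).isoformat(),
        "sale_date_to": today.isoformat(),
    }


# A fixed date keeps the example reproducible.
query = build_transaction_query("TX", "single_family", years_back=2, today=date(2025, 6, 30))
print(query)
```

Keeping query construction in one small function makes it easy to start narrow (one state, one property type) and widen the criteria later without touching the rest of the pipeline.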

The response returns structured data, usually in JSON or CSV format, containing all matching records with their associated fields. Your application parses this response, extracts the relevant information, and stores or processes it according to your business logic.
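
As a rough illustration of the parsing step, the sketch below handles a JSON payload and keeps only the records that carry a sale price. The payload shape and every field name in it (`num_found`, `records`, `sale_price`, and so on) are invented for this example; real responses will differ by provider.

```python
import json

# Made-up payload standing in for an API response body.
sample_response = """
{
  "num_found": 2,
  "records": [
    {"address": "101 Example St", "city": "Austin", "sale_price": 410000, "sale_date": "2024-03-15"},
    {"address": "202 Sample Ave", "city": "Dallas", "sale_price": null, "sale_date": "2023-11-02"}
  ]
}
"""


def extract_sales(raw_json):
    """Parse the payload and keep only records that carry a sale price."""
    payload = json.loads(raw_json)
    return [
        (record["address"], record["sale_price"], record["sale_date"])
        for record in payload.get("records", [])
        if record.get("sale_price") is not None
    ]


sales = extract_sales(sample_response)
print(sales)
```

Filtering out records with missing values at parse time is one design choice among several. Some teams prefer to store everything and handle gaps downstream, so treat the filter as a placeholder for your own business logic.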

For bulk operations, many providers support download endpoints that queue large result sets for asynchronous retrieval. This approach handles datasets too large for synchronous responses without timing out or overwhelming your application.
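
An asynchronous download usually means submitting a job and polling until it finishes. The sketch below shows one way to structure that loop. The status values (`queued`, `running`, `completed`, `failed`) and the `results` key are hypothetical, and `get_status` stands in for whatever call your provider exposes for checking a job.

```python
import time


def wait_for_download(get_status, poll_interval=5.0, max_polls=60, sleep=time.sleep):
    """Poll get_status() until the job completes, then return the results it reports.

    get_status is any callable returning a dict such as {"status": "running"} or
    {"status": "completed", "results": [...]}. These keys and values are hypothetical.
    """
    for _ in range(max_polls):
        job = get_status()
        if job["status"] == "completed":
            return job["results"]
        if job["status"] == "failed":
            raise RuntimeError("bulk download failed")
        sleep(poll_interval)
    raise TimeoutError("bulk download did not finish in time")


# Demo with canned statuses in place of real API calls.
statuses = iter([
    {"status": "queued"},
    {"status": "running"},
    {"status": "completed", "results": ["part-1.json", "part-2.json"]},
])
files = wait_for_download(lambda: next(statuses), sleep=lambda seconds: None)
print(files)
```

Capping the number of polls keeps a stalled job from blocking the caller indefinitely, and passing `sleep` as a parameter lets the loop be exercised in tests without waiting.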

What types of records are included in a real estate transaction database?

Transaction databases typically include deed records, sale prices, ownership histories, tax assessments, mortgage information, and property characteristics. The specific fields vary by provider, so verify that coverage matches your requirements before integrating.

How is per-record pricing different from per-request pricing?

Per-request pricing charges for every API call regardless of whether it returns useful data. Per-record pricing charges only for the actual data delivered. This distinction significantly impacts budget predictability, especially for applications with variable query patterns.

Can I access both residential and commercial property data through a single API?

Some providers require separate integrations or contracts for different property types. Others offer unified access to residential, commercial, and industrial records under one integration. Consolidated access reduces maintenance complexity and ensures consistent data schemas across property categories.

How frequently is transaction data updated?

Update frequency varies by provider. Some refresh data weekly or monthly, while others provide daily updates. For time-sensitive applications like underwriting or fraud detection, prioritize providers with more frequent update cycles.

The demand for reliable housing transaction data continues to grow as more industries recognize its value for decision-making, risk management, and product development. Industry analysts project the PropTech sector will expand at an 11.9% compound annual growth rate through 2032, driven largely by demand for programmatic data access. As data and technology become top spending priorities for real estate leaders, the gap between organizations with reliable data infrastructure and those without will continue to widen.

Selecting the right provider determines whether your team captures that advantage or struggles with workarounds and limitations. The evaluation criteria outlined here, from pricing transparency to geographic coverage, should guide your assessment of any potential partner.

Datafiniti offers a property data API built around the principles that matter most: per-record pricing that aligns costs with value, no rate limiting to constrain your throughput, nationwide coverage across residential, commercial, and industrial properties, and comprehensive field access without hidden fees. To explore how structured property data can support your specific use case, reach out to the Datafiniti team and request a demo.
