
Best Sources for Bulk Real Estate Transaction Data

Key Takeaways

- Bulk real estate transaction data powers investment models, underwriting systems, and PropTech platforms, but only when the source covers the entire market without geographic gaps.

- Transaction data from county recorders and tax assessors is the authoritative foundation, but aggregating it at national scale requires a commercial provider.

- Providers that package access by region force teams to manage multiple contracts, creating fragmentation that compounds as portfolios scale.

- The fields that matter most go beyond sale price: ownership chain, recording dates, parcel identifiers, and grantee/grantor data are what make bulk data actionable.

- A housing sales API built on a property sales database with true national coverage and residential, commercial, and industrial records eliminates the need to layer multiple sources.

- Before committing to any bulk data provider, confirm that their coverage is genuinely national. Marketing claims are easy to make. Coverage gaps surface in production.

Real estate transaction volume tells you almost everything that matters about a market. According to CBRE's Q4 2025 capital markets report, U.S. commercial real estate investment totaled $499 billion in 2025, a 22% increase over 2024 and the second consecutive year of growth. Add residential transaction activity on top of that, and the data trail generated across the U.S. property market each year is enormous.

For any developer, analyst, or data team building tools on top of that trail, the challenge is the same: how do you access bulk real estate transaction data at scale without patching together regional sources, managing multiple vendor contracts, or paying for records you never receive?

The answer depends less on which providers you consider and more on how you evaluate them. Teams that approach a property sales database evaluation the same way they evaluate a point solution end up with the same problem they started with: data that covers their current market but breaks when they try to grow.

This guide walks through where bulk real estate transaction data comes from, what fields actually matter for downstream applications, why national coverage is the selection criterion that separates scalable solutions from short-term fixes, and what to ask any provider before signing.

What Does Bulk Real Estate Transaction Data Actually Include?

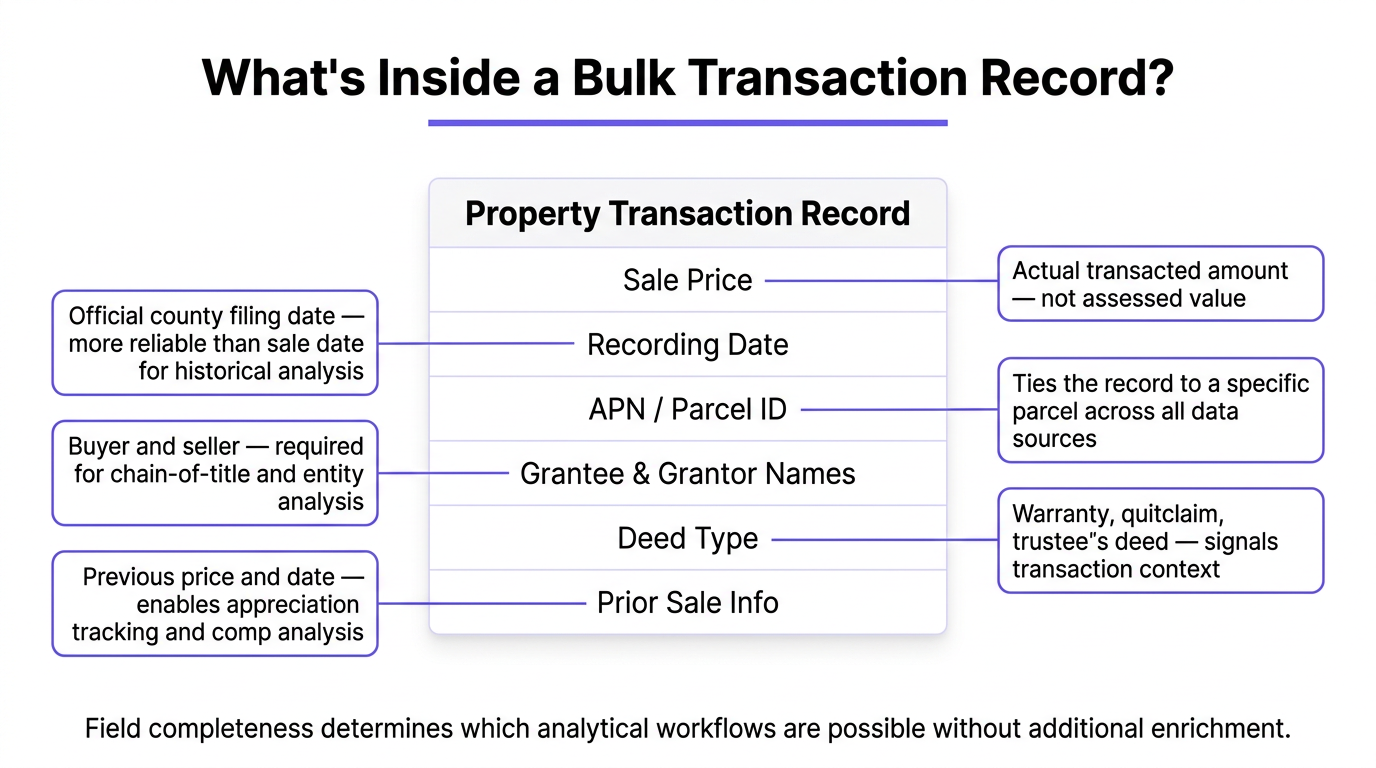

The term gets used loosely. A provider might describe their product as a complete transaction database while delivering records that include little more than sale price and address. Before evaluating sources, it is worth being specific about which fields matter for the use cases that drive demand for bulk access.

Core Transaction Fields

At minimum, a usable bulk real estate transaction data record includes the sale price, recording date, document number, and the legal description or APN (assessor parcel number) that ties the transaction to a specific parcel. Recording date is often more useful than sale date for historical analysis because it captures when the transfer was officially documented at the county level. Document numbers enable cross-referencing with deed records when you need to verify specifics or pull the original filing.

Many use cases also require buyer and seller name fields, referred to in deed language as grantee and grantor. These are essential for ownership chain analysis, portfolio tracking, and any application that tries to identify the entity behind a transaction rather than just the address. Entity resolution, the process of connecting an LLC or trust name to a beneficial owner, requires this data as a starting point.

Deeper records include mortgage and lien data alongside the transaction event, deed type classifications (warranty deed, quitclaim, trustee's deed, and so on), and prior sale information for the same parcel. The fields available through a well-structured housing sales API directly affect which analytical workflows are possible without additional enrichment from a second source.
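As a rough illustration of the core fields described above, the sketch below models a single transaction record and reports which core fields are empty. The class name, field names, and sample values are hypothetical and are not taken from any provider's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class TransactionRecord:
    """One recorded transfer. Field names are illustrative, not a provider schema."""
    apn: str                        # assessor parcel number
    county_fips: str                # 5-digit county identifier
    recording_date: date            # date the deed was recorded at the county
    document_number: str            # recorder's document or instrument number
    sale_price: Optional[float]     # may be absent on some records
    grantor: Optional[str] = None   # seller
    grantee: Optional[str] = None   # buyer
    deed_type: Optional[str] = None


def missing_core_fields(record: TransactionRecord) -> list[str]:
    """Return the names of core fields that are empty on this record."""
    core = ("apn", "recording_date", "document_number", "sale_price")
    return [name for name in core if getattr(record, name) in (None, "")]


# Hypothetical sample record with no sale price.
record = TransactionRecord(
    apn="123-456-789",
    county_fips="04013",
    recording_date=date(2024, 3, 15),
    document_number="2024-0012345",
    sale_price=None,
    grantor="SMITH JOHN",
    grantee="ACME HOLDINGS LLC",
    deed_type="warranty deed",
)
print(missing_core_fields(record))  # ['sale_price']
```

Running a check like this over a sample file before integration gives a quick read on how often the fields you depend on are actually populated.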

Real Estate Ownership Data and Chain of Title

Real estate ownership data is closely related to transaction data but serves a distinct purpose. Where transaction records capture events, ownership data captures the current state: who holds the deed, how long they have held it, what the ownership structure looks like, and whether there are encumbrances that affect the title. Ownership duration is a key signal for investment targeting. Properties held for a decade or more by individual owners tend to attract investor attention precisely because long hold periods can indicate motivation to sell or flexibility on price that recently transacted properties do not have.
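Ownership duration can be derived from the most recent recording date on each parcel. The following is a minimal sketch, assuming you already have a mapping from parcel identifier to last recorded transfer date; the function names and the ten-year default are illustrative.

```python
from datetime import date


def years_held(last_recording_date: date, as_of: date) -> int:
    """Whole years between the last recorded transfer and the as-of date."""
    years = as_of.year - last_recording_date.year
    # Subtract one if the anniversary has not yet occurred this year.
    if (as_of.month, as_of.day) < (last_recording_date.month, last_recording_date.day):
        years -= 1
    return years


def long_hold_parcels(last_transfer_by_apn: dict, as_of: date, min_years: int = 10) -> list:
    """Return parcel IDs whose current owner has held for at least min_years."""
    return sorted(
        apn
        for apn, recorded in last_transfer_by_apn.items()
        if years_held(recorded, as_of) >= min_years
    )


# Hypothetical parcels and dates.
parcels = {
    "A": date(2010, 6, 1),
    "B": date(2019, 1, 15),
    "C": date(2015, 7, 2),
}
print(long_hold_parcels(parcels, as_of=date(2025, 7, 1)))  # ['A']
```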

Chain of title, the sequential history of ownership transfers, requires that transaction records be complete enough to reconstruct the full sequence. Gaps in public records, which are common in counties with inconsistent digitization, can break the chain and require fallback logic or manual verification. Commercial providers that aggregate from multiple source types, including both recorder data and tax assessor rolls, are better positioned to fill those gaps than providers relying on a single upstream source.
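One simple way to detect a broken chain is to sort a parcel's transfers by recording date and check that each grantee appears as the grantor of the next transfer. The sketch below assumes a very naive name normalization (uppercase, commas removed); real entity matching is considerably harder, and all names here are hypothetical.

```python
from datetime import date
from typing import NamedTuple


class Transfer(NamedTuple):
    recording_date: date
    grantor: str
    grantee: str


def normalize_name(name: str) -> str:
    """Naive normalization: uppercase, drop commas, collapse whitespace."""
    return " ".join(name.upper().replace(",", " ").split())


def find_chain_breaks(transfers):
    """Return (previous, next) pairs where the grantee and next grantor differ."""
    ordered = sorted(transfers, key=lambda t: t.recording_date)
    breaks = []
    for prev, nxt in zip(ordered, ordered[1:]):
        if normalize_name(prev.grantee) != normalize_name(nxt.grantor):
            breaks.append((prev, nxt))
    return breaks


# Hypothetical transfers for one parcel; the 2012 -> 2020 link does not connect.
history = [
    Transfer(date(2005, 4, 1), "Original Builder Inc", "Smith, John"),
    Transfer(date(2012, 9, 10), "SMITH JOHN", "Acme Holdings LLC"),
    Transfer(date(2020, 2, 20), "Pine Street Trust", "Doe, Jane"),
]
for prev, nxt in find_chain_breaks(history):
    print(f"Gap between {prev.recording_date} and {nxt.recording_date}")
```

A flagged break does not prove a missing record. It may also reflect a name variation or an unrecorded entity change, which is why these cases usually go to fallback logic or manual review.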

The field completeness of ownership records also varies by property type. Residential parcels tend to have more standardized documentation because of the mortgage process, which requires clean title. Commercial and industrial properties often involve more complex ownership structures with entities, trusts, and holding companies that require additional parsing to identify the actual beneficial owner.

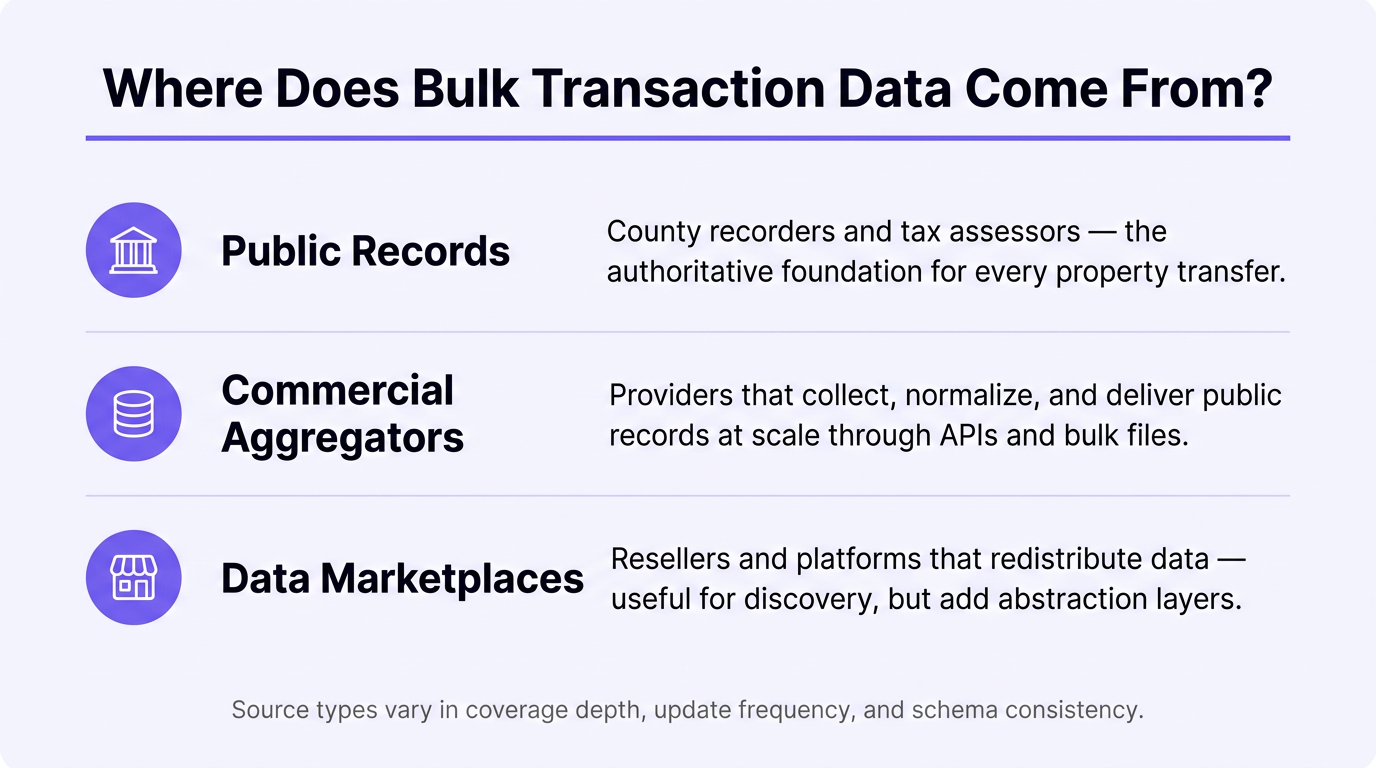

Where Does Bulk Real Estate Transaction Data Come From?

Understanding the source layer matters because it determines data freshness, completeness, and the coverage gaps you will encounter in production. Providers that source data from the same upstream channels will have similar strengths and weaknesses. The differentiation comes from how much of the country they cover, how often they update, and what normalization work they do before delivering records.

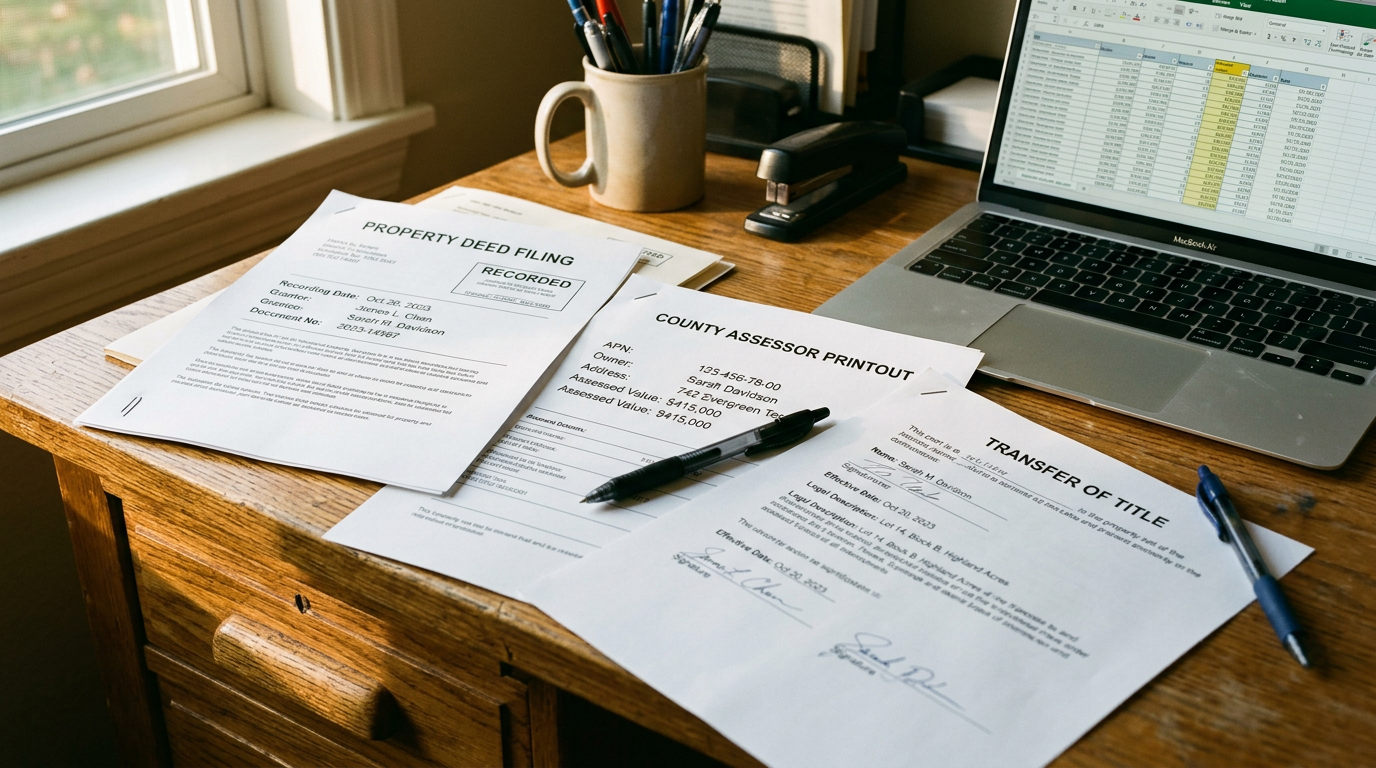

Public Records: County Recorders and Tax Assessors

Every property transfer in the United States generates a public record. When a deed is recorded at the county recorder's office, it becomes part of the permanent public record. Tax assessors, which typically operate at the county level, maintain separate records that tie ownership information to assessment values and tax history. Together, these two record types form the authoritative foundation for any property sales database.

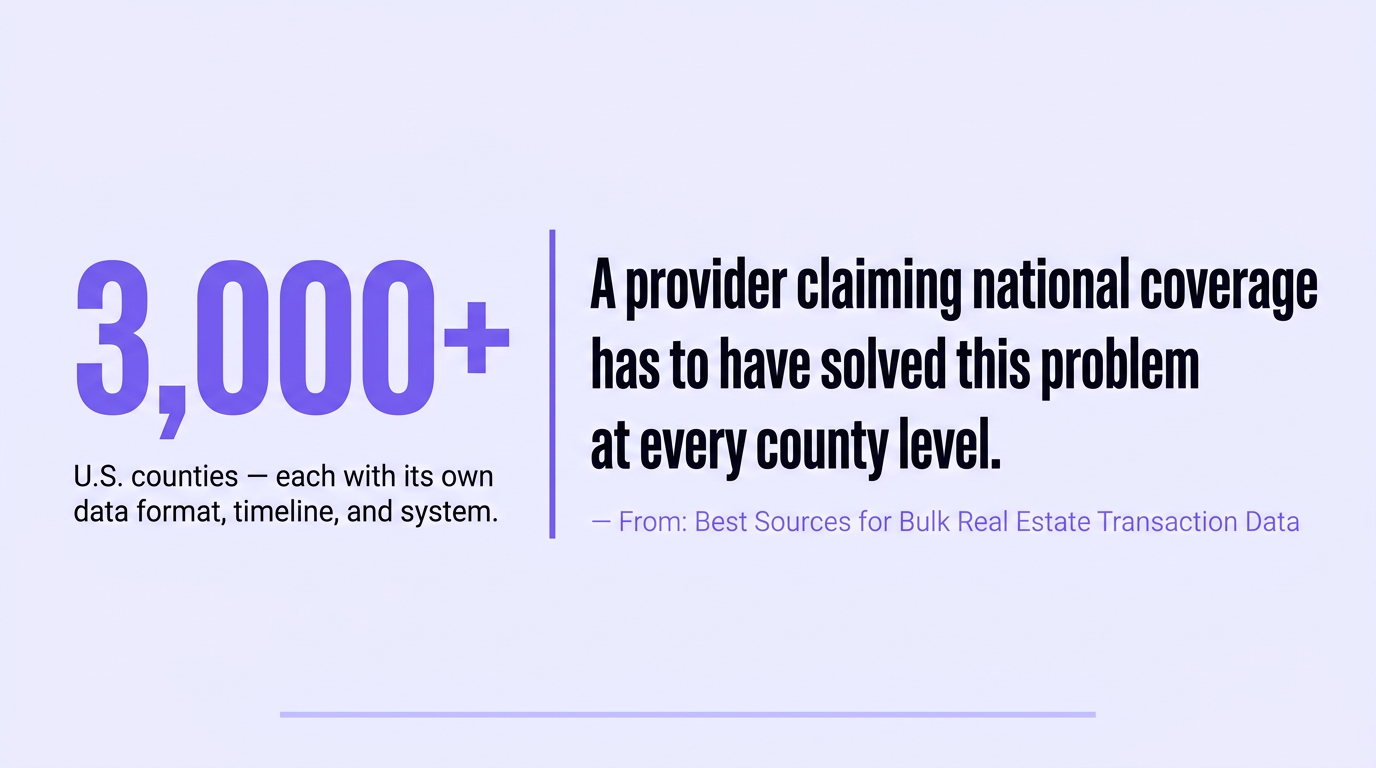

The challenge with public records is that the U.S. has over 3,000 counties, each with its own system, digitization timeline, and data format. Some counties provide daily electronic feeds. Others require manual retrieval of physical documents. A provider claiming national coverage has to have solved this problem in every county, including the rural markets and smaller jurisdictions where digitization is slowest. The fragmentation of county-level data is the primary reason building a bulk real estate transaction data feed in-house is prohibitively expensive for most teams.

Commercial Data Aggregators

Commercial aggregators sit between public records and end users. They handle the county-by-county collection problem, normalize field names across jurisdictions, resolve entity names, and deliver the data through APIs or bulk file transfers. The value is not access to data that was otherwise unavailable but rather access to data that has already been collected, cleaned, and structured for programmatic use.
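To give a sense of what field normalization across jurisdictions involves, here is a minimal sketch that maps differing source field names onto one canonical schema. The alias names are invented for illustration and do not correspond to any actual county's format.

```python
# Canonical field name -> source field names seen across jurisdictions.
# All alias names below are hypothetical.
FIELD_ALIASES = {
    "sale_price": ("sale_price", "SALE_AMT", "ConsiderationAmount"),
    "recording_date": ("recording_date", "REC_DATE", "RecordedDate"),
    "document_number": ("document_number", "DOC_NUM", "InstrumentNo"),
    "apn": ("apn", "PARCEL_ID", "ParcelNumber"),
}


def normalize_record(raw: dict) -> dict:
    """Map a raw county record onto canonical field names; missing fields become None."""
    normalized = {}
    for canonical, aliases in FIELD_ALIASES.items():
        normalized[canonical] = next(
            (raw[alias] for alias in aliases if raw.get(alias) not in (None, "")),
            None,
        )
    return normalized


raw_county_row = {
    "SALE_AMT": "350000",
    "REC_DATE": "2024-03-15",
    "InstrumentNo": "2024-0012345",
}
print(normalize_record(raw_county_row))
```

Multiply this mapping by thousands of jurisdictions, each with its own types, date formats, and edge cases, and the value of having an aggregator do it upstream becomes clear.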

Aggregator quality varies significantly, particularly on two dimensions: the completeness of their county coverage and the consistency of their schema across property types. A provider that covers, say, 95% of U.S. counties may sound nearly complete, but those missing counties are not randomly distributed. They tend to cluster in rural markets and secondary metros where data collection is harder.

Teams that discover coverage gaps after integration have already built query logic, normalization pipelines, and dashboards around the assumption that the data is complete. When those gaps surface in production, the cost of remediation is high. True national access, with consistent coverage across all property types, is what separates a genuinely scalable real estate data integration from one that breaks as portfolios grow.
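A coverage check can be run before integration rather than after. The sketch below assumes the provider can supply a list of county FIPS codes they cover and compares it against the counties your application needs; the function name and inputs are illustrative.

```python
def coverage_gaps(required_fips, provider_fips):
    """Return (missing county codes, share of required counties covered)."""
    # Left-pad to 5 digits so "6037" and "06037" compare equal.
    required = {code.zfill(5) for code in required_fips}
    covered = {code.zfill(5) for code in provider_fips}
    missing = sorted(required - covered)
    coverage = 1 - len(missing) / len(required) if required else 1.0
    return missing, coverage


# Sample county FIPS codes: Maricopa AZ, Appling GA, Harris TX, Los Angeles CA.
required = ["04013", "13001", "48201", "6037"]
provider = ["04013", "48201", "06037"]

missing, coverage = coverage_gaps(required, provider)
print(missing)   # ['13001']
print(coverage)  # 0.75
```

Measuring coverage against your own target list, instead of the provider's national percentage, is what reveals whether the missing counties are the ones you care about.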

Data Marketplaces and Resellers

A third category sits between aggregators and end users: data marketplaces and resellers that package and redistribute data from primary providers. These can be useful for discovery and comparison shopping, but they add a layer of abstraction that affects update frequency, schema consistency, and support responsiveness. If an issue arises with a specific field or coverage area, the reseller may have limited ability to address it at the source.

Marketplaces are also more likely to expose data through a one-size-fits-all pricing structure that does not align with how bulk data is actually consumed. A team running large batch pulls for model training has different economics than a team doing real-time lookups for a SaaS product. Providers that charge per record delivered, rather than per query attempted, are better suited to high-volume workflows where query efficiency cannot be guaranteed.
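The difference between the two pricing models is easy to work through with arithmetic. The sketch below uses made-up prices and hit rates, expressed in whole cents to avoid floating-point rounding; none of the numbers reflect any provider's actual pricing.

```python
def compare_pricing(queries, hit_rate_pct, records_per_hit,
                    cents_per_request, cents_per_record):
    """Compare total cost (in cents) of per-request vs per-record pricing."""
    hits = queries * hit_rate_pct // 100      # queries that return data
    records = hits * records_per_hit          # records actually delivered
    return {
        "records_delivered": records,
        "per_request_cents": queries * cents_per_request,  # pay for every query
        "per_record_cents": records * cents_per_record,    # pay only for results
    }


# Hypothetical workload: 100,000 queries, 60% return one record, 1 cent each way.
result = compare_pricing(
    queries=100_000,
    hit_rate_pct=60,
    records_per_hit=1,
    cents_per_request=1,
    cents_per_record=1,
)
print(result)
# {'records_delivered': 60000, 'per_request_cents': 100000, 'per_record_cents': 60000}
```

Under these assumed numbers, the per-request model costs $1,000 against $600 for per-record, and the gap widens as the hit rate falls.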

The scale of potential bulk access demand is significant: the National Association of Realtors tracks millions of existing home sales annually across all U.S. markets, each generating a deed record and an ownership transfer event. Any platform operating nationally needs a data layer designed for that kind of volume.

Why National Coverage Is the Real Differentiator in Bulk Transaction Data

Coverage is the evaluation criterion that gets the least attention during the buying process and causes the most problems after integration. It is easy to confirm that a provider has data. It is harder to confirm that their data has no meaningful gaps until your application is running in production.

The Hidden Cost of Regional Packaging

Many providers structure access by geography. They sell a national product in theory but deliver it through regional packages, metro bundles, or state-level tiers that require separate agreements and sometimes separate API integrations. A team building a platform that needs to cover all 50 states with consistent query behavior will encounter two problems with this model.

First, the contracting overhead. Multiple agreements, each with their own pricing, renewal terms, and support contacts, create administrative burden that grows with the number of markets covered. A portfolio that starts in three states and expands to ten requires revisiting every contract. At 20 states, the management overhead is substantial. Providers that bundle regional access separately are not designing for scale. They are designing for their own revenue model, not for the operational reality of a growing data team.

Second, the query inconsistency. When regional packages come from different data pipelines, field completeness, update frequency, and normalization logic can vary between geographies. A sale price field that is reliably populated in the Southeast coverage area may have higher null rates in the Midwest bundle if those markets draw from different upstream sources. Building reliable downstream logic on top of inconsistent upstream data requires defensive coding, validation layers, and ongoing monitoring. None of that engineering work adds business value. It is friction created by the data provider's architecture, not by the complexity of the problem being solved.
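Field-level inconsistency between regions can be measured directly on a sample. This sketch computes the null rate of one field per group; the region labels and records are hypothetical.

```python
from collections import defaultdict


def null_rate_by_group(records, field, group_key):
    """Return {group: share of records where `field` is missing or empty}."""
    totals = defaultdict(int)
    nulls = defaultdict(int)
    for record in records:
        group = record.get(group_key, "unknown")
        totals[group] += 1
        if record.get(field) in (None, ""):
            nulls[group] += 1
    return {group: nulls[group] / totals[group] for group in totals}


sample = [
    {"region": "southeast", "sale_price": 310000},
    {"region": "southeast", "sale_price": 455000},
    {"region": "midwest", "sale_price": None},
    {"region": "midwest", "sale_price": 198000},
]
print(null_rate_by_group(sample, "sale_price", "region"))
# {'southeast': 0.0, 'midwest': 0.5}
```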

What True National Access Requires

Genuine national coverage for bulk real estate transaction data means the same schema, the same update frequency, the same API behavior, and the same pricing structure regardless of which county the record comes from. It means residential, commercial, and industrial property types under a single integration rather than separate products with separate documentation. A developer querying for multifamily transactions in Phoenix and industrial transactions in rural Georgia should get structurally identical responses without writing different query logic for each.
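"Structurally identical responses" is something you can test. The sketch below compares the field names and value types of two records and reports where they differ; the sample records and field names are invented for illustration.

```python
def schema_of(record: dict) -> dict:
    """Map each field name to the type name of its value."""
    return {key: type(value).__name__ for key, value in record.items()}


def schema_diff(first: dict, second: dict) -> dict:
    """Report fields present in only one record and fields whose types differ."""
    a, b = schema_of(first), schema_of(second)
    return {
        "only_in_first": sorted(a.keys() - b.keys()),
        "only_in_second": sorted(b.keys() - a.keys()),
        "type_mismatch": sorted(k for k in a.keys() & b.keys() if a[k] != b[k]),
    }


# Hypothetical responses for two markets and property types.
phoenix_multifamily = {
    "apn": "123-45-678",
    "sale_price": 1000000,
    "property_type": "multifamily",
    "grantee": "ACME HOLDINGS LLC",
}
georgia_industrial = {
    "apn": "0042-017",
    "sale_price": "250000",   # string instead of number
    "property_type": "industrial",
}
print(schema_diff(phoenix_multifamily, georgia_industrial))
# {'only_in_first': ['grantee'], 'only_in_second': [], 'type_mismatch': ['sale_price']}
```

An empty diff across geographies and property types is a reasonable proxy for the normalization work having been done upstream.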

The documentation quality of a provider often signals how well their coverage actually holds up. Providers with fragmented backends tend to have fragmented documentation, because each regional dataset or property type has its own edge cases and field behavior that a centralized document cannot cleanly describe. When documentation requires a sales conversation to understand basic query structure, that is usually a sign that the data itself is inconsistent. Well-structured, publicly accessible API documentation that covers all property types with consistent field definitions is a reliable indicator that the provider has done the normalization work upstream.

Full national access without geographic packaging also eliminates the scaling problem entirely. A team that signs one agreement, integrates one API, and builds one query pattern does not need to renegotiate when their coverage needs expand. The platform scales with them. That is the structural advantage of a provider designed for national bulk access from the start, not one that assembled regional datasets over time and packaged them as a unified product.

6 Questions to Ask Any Bulk Real Estate Transaction Data Provider

Before committing to an integration, these questions reveal the real quality and scalability of any property sales database or housing sales API. The answers matter more than marketing materials.

- What is your county-level coverage, and how do you handle gaps? Ask for a specific number, not a claim like "nationwide." Providers with genuine national coverage can tell you exactly how many counties they cover and what their process is when a county has a data gap or outage. Vague answers are a signal.

- Which property types are included, and are they under the same API? Residential-only coverage is inadequate for most institutional or multi-use applications. Ask explicitly whether commercial and industrial records come through the same integration or require a separate product with different pricing and documentation.

- How does your pricing model work at scale? Per-record pricing, where you pay only for records actually delivered, is preferable to per-request models that charge for every query regardless of whether it returns data. At bulk volumes, that distinction has a significant effect on total cost.

- What is your update frequency by data type and geography? Recording date data from county recorders typically updates on different cycles than tax assessor ownership records. Ask whether update frequency is consistent across regions or whether some markets lag others. Lagging data in specific geographies is a symptom of coverage gaps.

- Which specific fields are available for transaction and ownership records? Get the actual field list, not a category description. The difference between "buyer and seller information" and actual grantee/grantor name fields, document numbers, and deed types determines whether the data supports chain-of-title analysis or only surface-level sale event tracking.

- Can I explore the data before committing to an integration? Providers with a visual portal for browsing available records and testing query logic before writing integration code let you verify coverage and field completeness against your actual use case. If a provider requires a full API integration before you can see what the data actually looks like, that is a meaningful risk to take on before you know the data meets your requirements.
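The update-frequency question in the list above can be checked against a data sample. This sketch takes (county, recording date) pairs and reports how many days old the newest record in each county is; the county codes and dates are hypothetical.

```python
from datetime import date


def freshness_lag_days(records, as_of: date) -> dict:
    """Return {county: days between the newest recording date and as_of}."""
    latest = {}
    for county, recorded in records:
        if county not in latest or recorded > latest[county]:
            latest[county] = recorded
    return {county: (as_of - newest).days for county, newest in latest.items()}


sample = [
    ("04013", date(2025, 6, 28)),
    ("04013", date(2025, 6, 1)),
    ("13001", date(2025, 3, 1)),
]
print(freshness_lag_days(sample, as_of=date(2025, 7, 1)))
# {'04013': 3, '13001': 122}
```

A county whose newest record is months old while others are days old is worth raising with the provider before integration.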

Frequently Asked Questions

What Is Bulk Real Estate Transaction Data Used For?

Bulk real estate transaction data is used to build investment models, train automated valuation systems, power underwriting workflows, detect fraud patterns, and support market intelligence dashboards. The common thread across all of these applications is that they require large volumes of historical and current records rather than single-property lookups. Any platform that needs to analyze trends, score portfolios, or train machine learning models on property behavior requires bulk access rather than on-demand API queries.

What Fields Should a Property Sales Database Include?

A well-structured property sales database should include at minimum: sale price, recording date, document number, APN or parcel ID, grantee and grantor names, deed type, legal description, and prior sale information. More complete records also include mortgage amount, lender information, lien data, and tax assessment values at the time of sale. The presence of grantee and grantor fields, specifically, determines whether chain-of-title analysis and entity-level ownership tracking are possible.
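The minimum field list above can be turned into a quick check against a provider's published field list. In this sketch the canonical field names are assumptions chosen for illustration; a real provider will use its own naming, so some mapping would be needed first.

```python
# Minimum fields named above, under hypothetical canonical names.
REQUIRED_FIELDS = {
    "sale_price",
    "recording_date",
    "document_number",
    "apn",
    "grantee",
    "grantor",
    "deed_type",
    "legal_description",
    "prior_sale",
}


def missing_fields(provider_fields) -> list:
    """Return required fields absent from the provider's field list."""
    available = {name.strip().lower() for name in provider_fields}
    return sorted(REQUIRED_FIELDS - available)


# A hypothetical spec sheet that only covers surface-level sale events.
spec_sheet = ["sale_price", "recording_date", "APN", "address"]
print(missing_fields(spec_sheet))
# ['deed_type', 'document_number', 'grantee', 'grantor', 'legal_description', 'prior_sale']
```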

How Is Real Estate Ownership Data Different From Transaction Data?

Transaction data captures sale events: when a property transferred, at what price, and between which parties. Real estate ownership data captures the current state of who holds the deed, for how long, and under what ownership structure. Both are useful for different purposes. Transaction data supports trend analysis, market modeling, and historical research. Ownership data supports prospecting, portfolio analysis, and investment targeting based on characteristics like ownership duration or entity type. Most serious applications require both, which is why a single provider covering both datasets under one integration is more efficient than sourcing them separately.

What Is a Housing Sales API, and How Does It Differ From Bulk File Delivery?

A housing sales API lets you query transaction and ownership records programmatically in real time, useful for lookups, enrichment workflows, and applications where you need current data on demand. Bulk file delivery, typically via SFTP, S3, or a data warehouse integration, provides large datasets delivered on a scheduled basis, suited for model training, database seeding, and analytics workloads. Many production data architectures use both. The API handles real-time lookups; bulk delivery handles the historical dataset that feeds models and dashboards. The best providers support both access patterns through the same account and pricing structure.
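On the bulk side, delivered files are usually too large to load into memory at once, so they are processed as a stream. The sketch below assumes a newline-delimited JSON file, which is one common bulk format but not necessarily what a given provider delivers; the function name and sample data are illustrative.

```python
import io
import json


def iter_records(lines, bad_lines):
    """Yield one parsed record per non-empty line; note line numbers that fail to parse."""
    for line_number, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue
        try:
            yield json.loads(line)
        except json.JSONDecodeError:
            # Record the bad line and keep going rather than abort the whole load.
            bad_lines.append(line_number)


# An in-memory stand-in for a delivered file; with a real file, pass open(path).
bulk_file = io.StringIO(
    '{"apn": "123", "sale_price": 350000}\n'
    "\n"
    "not json\n"
    '{"apn": "456", "sale_price": null}\n'
)
bad = []
records = list(iter_records(bulk_file, bad))
print(len(records), bad)  # 2 [3]
```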

Choosing the Right Source for Bulk Real Estate Transaction Data

The quality of a bulk real estate transaction data source is not primarily determined by the fields on the spec sheet. It is determined by whether its coverage holds consistently across every geography you need, every property type your application serves, and every query volume your system generates. Regional packaging, residential-only coverage, and opaque update cycles are not minor limitations. They create engineering problems that compound as your platform scales.

Teams that evaluate providers on national coverage first, and verify field completeness second, avoid the most costly integration mistakes. Documentation quality, pricing transparency, and the ability to explore data before committing to integration are secondary signals that confirm whether a provider has actually built what they claim.

Datafiniti offers structured bulk real estate transaction data with full national coverage across residential, commercial, and industrial property types, all under a single API integration with per-record pricing and no rate limiting. To see what is available for your use case and verify coverage before you build, get in touch with the team.
