Unlocking the MLS Database with APIs

Unlock the MLS database with APIs. Learn how to access property data, gain real estate insights, and integrate MLS data for your business needs.


Key Takeaways

A real estate transaction database is only as useful as the data it contains, the coverage it offers, and the pricing model that governs how you access it.

Before you integrate a property sales data API into your product, download our buyer's checklist and pressure-test every provider against it.

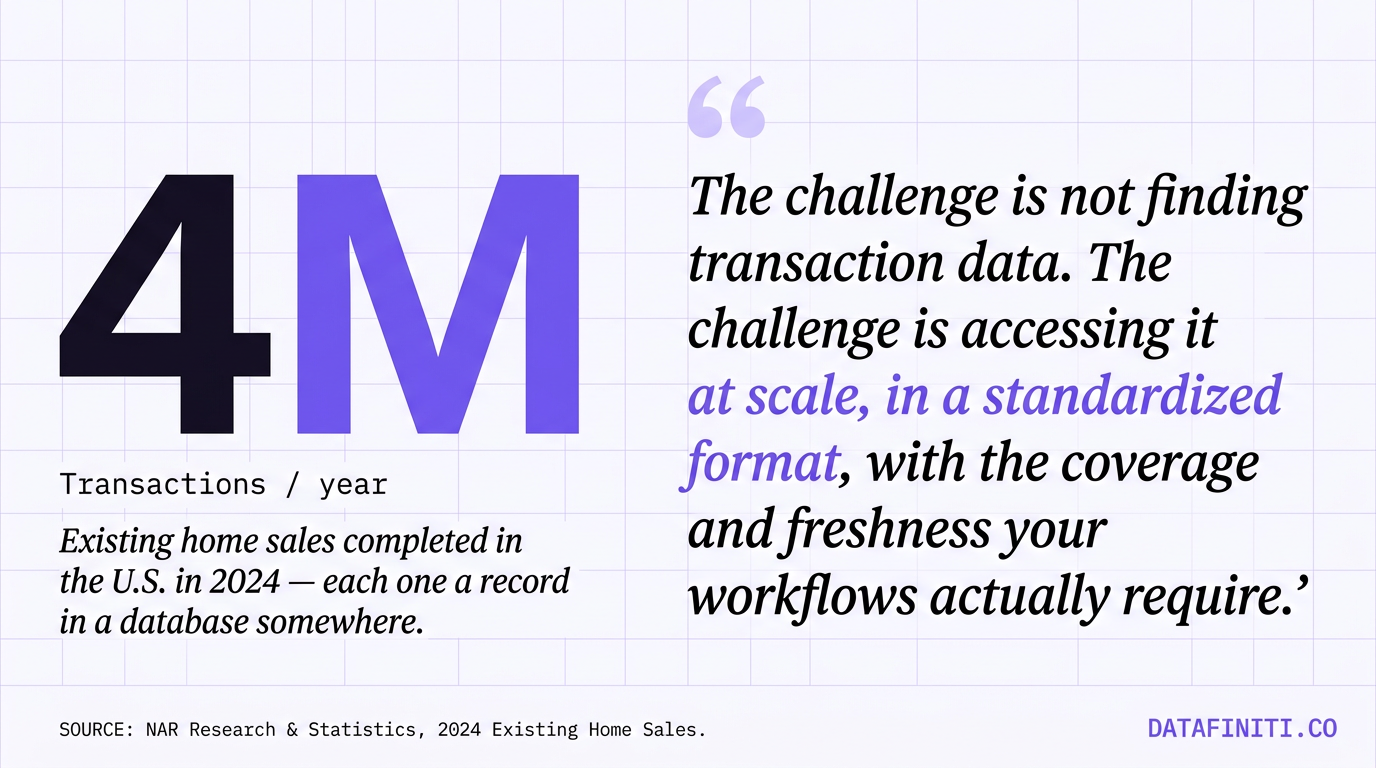

Every time a property changes hands in the United States, a record is created. That record captures the sale price, the parties involved, the financing terms, the property characteristics, and the legal documentation that makes the transfer official. Multiply that by the roughly four million existing home transactions completed in the U.S. in 2024 alone, add commercial and industrial closings, and you end up with a data asset that underpins nearly every serious PropTech product, investment model, and analytics platform in the market. A well-structured real estate transaction database is the foundation for automated valuations, ownership verification, fraud screening, and market intelligence.

The challenge is not finding transaction data. Public records exist in every county in the country. The challenge is accessing it at scale, in a standardized format, with the coverage and freshness your workflows actually require. This guide covers what a real estate transaction database contains, how the data gets there, what separates a genuinely useful one from a frustrating one, and what to evaluate before you commit to a provider.

A real estate transaction database converts raw public records, which are filed across thousands of county offices in inconsistent formats, into a queryable, standardized collection. The resulting data can be accessed through an API, a web portal, or bulk downloads, depending on the provider.

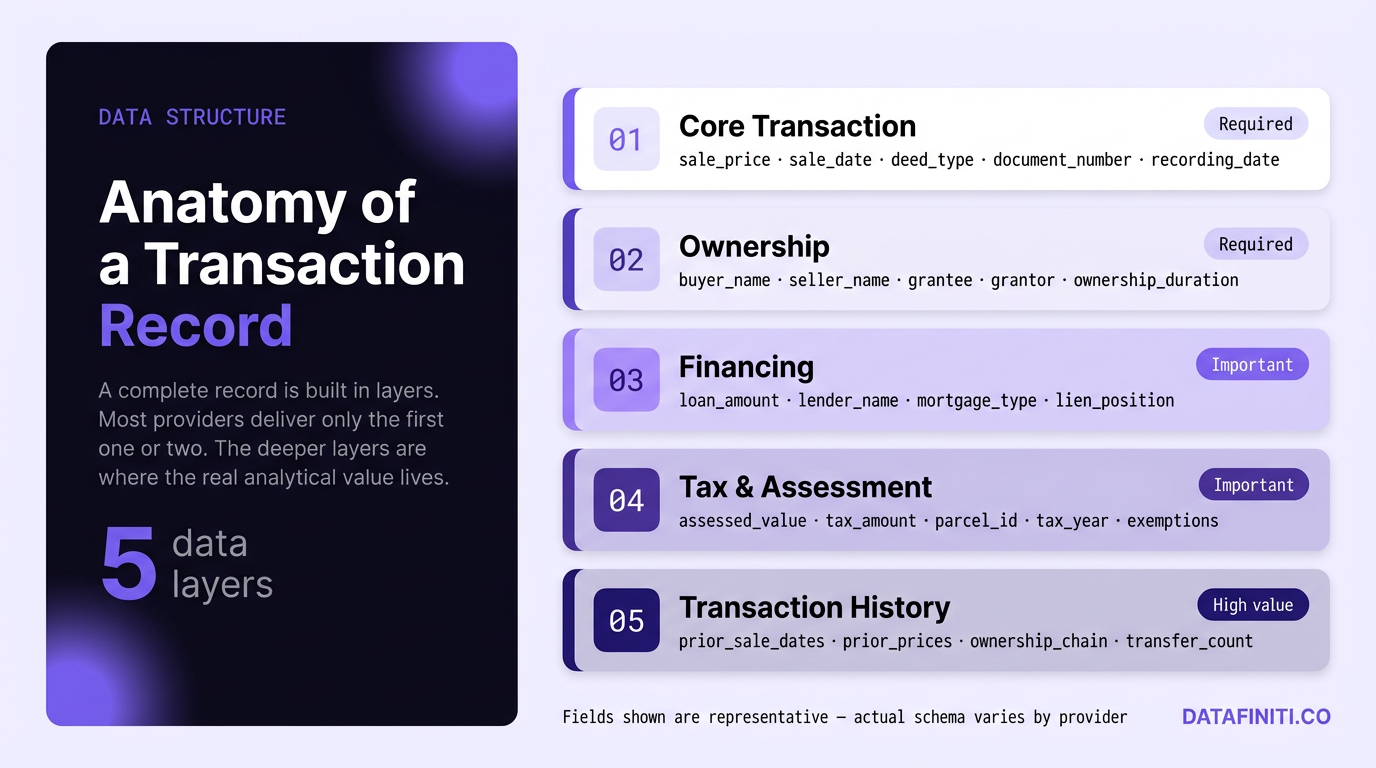

The depth of a real estate transaction database varies significantly across providers, but any dataset worth integrating should include a consistent set of foundational fields. Sale price and date are the bare minimum. More complete records extend into financing terms, deed type, buyer and seller names, grantee and grantor information, and the document number that ties the record back to the official county filing.

Ownership history is the layer that separates a bare list of sales from genuinely useful housing transaction data. Knowing that a property sold for a certain amount is valuable. Knowing that it changed hands three times in six years, and how each of those transactions was financed, tells a far more complete story for valuation models, investment screening, and fraud detection workflows. Tax assessment history rounds out the picture, giving teams access to assessed values, tax amounts, and parcel-level identifiers that enable joins with other datasets.

Property characteristics, while not strictly transactional, are almost always included alongside transaction records in a well-structured database. Square footage, lot size, bedroom and bathroom count, property type, year built, and zoning classification provide the context needed to make sense of any given sale price. Without them, transaction records are largely uninterpretable for analytics purposes.
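To make the field groups above concrete, here is a minimal sketch of how a transaction record and its parent property record might be modeled. The class and field names (`TransactionRecord`, `PropertyRecord`, `living_area_sqft`, and so on) are illustrative assumptions, not any provider's actual schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class TransactionRecord:
    """One recorded transfer. Field names are hypothetical."""
    parcel_id: str                     # join key to tax, zoning, and listing data
    document_number: str               # ties the record back to the county filing
    sale_date: date
    sale_price: Optional[int] = None   # may be absent in some records
    deed_type: Optional[str] = None
    grantor: Optional[str] = None      # seller
    grantee: Optional[str] = None      # buyer
    loan_amount: Optional[int] = None  # financing terms, when recorded


@dataclass
class PropertyRecord:
    """Property characteristics plus the transfers recorded against the parcel."""
    parcel_id: str
    property_type: str
    year_built: Optional[int] = None
    living_area_sqft: Optional[int] = None
    transactions: list[TransactionRecord] = field(default_factory=list)

    def price_per_sqft(self) -> Optional[float]:
        """Price per square foot of the most recent priced sale, if computable."""
        priced = [t for t in self.transactions if t.sale_price]
        if not priced or not self.living_area_sqft:
            return None
        latest = max(priced, key=lambda t: t.sale_date)
        return latest.sale_price / self.living_area_sqft
```

The `price_per_sqft` helper shows why characteristics travel with transactions: without the living area, the sale price alone cannot be normalized for comparison.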

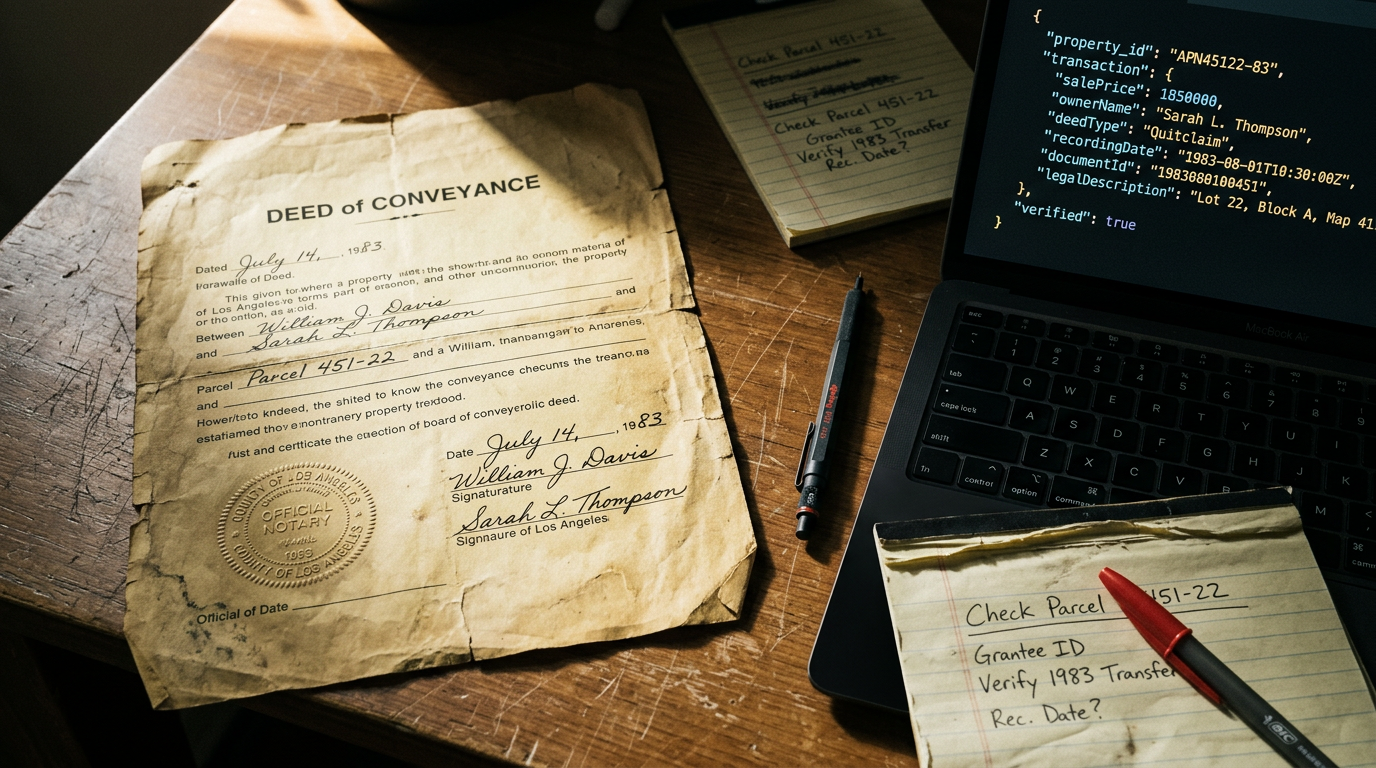

The primary source for real estate transaction data is the public record system. County recorder and assessor offices document every deed, mortgage, lien, and transfer of ownership. Recorded documents are public in all U.S. states, though access methods and record formats vary dramatically by jurisdiction, and a number of non-disclosure states do not require the sale price itself to be reported publicly. Some counties publish data online. Others require in-person requests or licensed data distribution agreements.

Because of this fragmentation, most organizations rely on data aggregators rather than building direct county integrations themselves. Aggregators collect from thousands of county sources, standardize the schema, deduplicate records, and deliver the result through a unified API. The quality of the aggregation process determines how consistent, complete, and current the resulting data will be in production. Teams that want to evaluate coverage before committing can explore the full property dataset without a long onboarding cycle.

Some providers supplement public records with MLS data, listing platform feeds, and web-collected property data. This multi-source approach helps fill gaps in county data and adds listing history, price changes, and current market activity that public records alone do not capture. The tradeoff is that multi-source pipelines require more sophisticated deduplication and normalization to prevent conflicting values from appearing in the same record.
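As a rough illustration of the deduplication and normalization step, the sketch below collapses records from several sources into one record per parcel and sale date, letting the highest-ranked source win whenever values conflict. The source names, the ranking, and the record layout are all assumptions made for the example. Real pipelines typically need fuzzier matching than an exact key.

```python
# Hypothetical source ranking: a lower number wins when values conflict.
SOURCE_PRIORITY = {"county_recorder": 0, "mls": 1, "web": 2}


def normalize_parcel_id(raw: str) -> str:
    """Strip punctuation and spaces so '012-345-678' and '012 345 678' match."""
    return "".join(ch for ch in raw if ch.isalnum()).upper()


def merge_records(records: list[dict]) -> dict[tuple[str, str], dict]:
    """Collapse duplicates keyed by (parcel_id, sale_date).

    Records are visited from most to least trusted source, and a field is
    only filled if no more trusted source has already supplied it.
    """
    merged: dict[tuple[str, str], dict] = {}
    ordered = sorted(records, key=lambda r: SOURCE_PRIORITY[r["source"]])
    for rec in ordered:
        key = (normalize_parcel_id(rec["parcel_id"]), rec["sale_date"])
        target = merged.setdefault(key, {})
        for name, value in rec.items():
            if name in ("source", "parcel_id"):
                continue
            if value is not None and name not in target:
                target[name] = value
    return merged
```

With this approach a listing feed can contribute fields the county record lacks, such as a list price, without overwriting the recorded sale price.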

The U.S. PropTech market is expected to grow from $21.5 billion in 2025 to nearly $77 billion by 2034. That growth depends heavily on access to reliable, structured property data, and transaction history is the most frequently requested data layer across nearly every category of PropTech product.

Automated valuation models (AVMs) are among the most data-intensive applications in real estate technology. Every AVM needs comparable sales data, cleaned and standardized, to produce reliable estimates. Development teams building or maintaining AVM pipelines query comprehensive ownership and transaction data continuously, often at national scale, to keep model inputs current. Any gap in transaction coverage directly degrades model accuracy.

Investment platforms use transaction history to identify acquisition targets, track portfolio performance, and screen for distressed assets. A property that shows multiple transfers in a short period, or a sale price well below assessed value, is a signal worth investigating. That signal only surfaces if the transaction record is complete, properly dated, and matched to the right parcel identifier.
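The two signals described above can be sketched as a small screening function. The thresholds (more than two transfers within 730 days, a latest sale below 80% of assessed value) are arbitrary placeholders chosen for illustration. They are not industry standards and would need tuning against real data.

```python
from datetime import date


def flag_signals(sales, assessed_value, window_days=730,
                 max_transfers=2, discount=0.8):
    """Return screening flags for one parcel.

    sales: list of (sale_date, sale_price) tuples, in any order.
    All thresholds are hypothetical defaults.
    """
    flags = []

    # Signal 1: more than max_transfers sales inside any window_days span.
    dates = sorted(d for d, _ in sales)
    for i, start in enumerate(dates):
        in_window = [d for d in dates[i:] if (d - start).days <= window_days]
        if len(in_window) > max_transfers:
            flags.append("rapid_transfers")
            break

    # Signal 2: most recent sale price well below assessed value.
    if sales and assessed_value:
        _, latest_price = max(sales, key=lambda s: s[0])
        if latest_price is not None and latest_price < discount * assessed_value:
            flags.append("below_assessed_value")

    return flags


history = [
    (date(2022, 1, 10), 300_000),
    (date(2022, 9, 1), 310_000),
    (date(2023, 6, 15), 200_000),
]
print(flag_signals(history, assessed_value=320_000))
```

Both checks depend on correctly dated sales matched to the right parcel, which is why an incomplete record silently suppresses the signal rather than raising an error.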

Fraud detection and identity verification are increasingly reliant on ownership data APIs. Lenders, insurance carriers, and e-commerce platforms use property ownership records to validate identities, verify addresses, and screen applications. A shipping address that does not match any ownership record, or a listed property owner whose tenure began unusually recently, can be flagged before a transaction completes. These workflows require high-confidence ownership data tied to verifiable transaction history.

The cost of poor transaction data is not abstract. An AVM built on incomplete comparable sales produces systematically biased estimates that erode user trust over time. A fraud screening model that relies on patchy ownership records generates false positives that delay legitimate transactions and false negatives that let fraudulent ones through. A PropTech product that works reliably in one metro but fails in another because its data provider has uneven geographic coverage creates a support burden and churn risk that is difficult to manage.

Data quality issues also compound at the pipeline level. A single malformed or missing parcel identifier causes downstream joins to fail silently. Duplicate records without proper deduplication inflate apparent transaction volume. Ownership chains with gaps in the middle make chain-of-title analysis unreliable. Every one of these problems lands on the engineering team, and none of them are visible until the product is already in use.
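A lightweight audit run before data reaches downstream joins can surface these three problems instead of letting them fail silently. The sketch below checks one parcel's transfers for missing parcel identifiers, duplicate document numbers, and breaks in the ownership chain. The dictionary keys are assumed field names, and the name comparison is deliberately naive. Real grantor and grantee names need proper entity matching.

```python
def audit_transfers(transfers):
    """Return a list of (issue, document_number) tuples for one parcel.

    transfers: list of dicts with parcel_id, document_number, sale_date
    (ISO 'YYYY-MM-DD' string), grantor, and grantee. Keys are hypothetical.
    """
    issues = []

    # 1. Missing parcel identifiers would make joins drop rows silently.
    with_parcel = []
    for t in transfers:
        if not t.get("parcel_id"):
            issues.append(("missing_parcel_id", t.get("document_number")))
        else:
            with_parcel.append(t)

    # 2. Duplicate documents would inflate apparent transaction volume.
    seen, unique = set(), []
    for t in with_parcel:
        doc = t["document_number"]
        if doc in seen:
            issues.append(("duplicate_document", doc))
        else:
            seen.add(doc)
            unique.append(t)

    # 3. Chain gaps: each seller should be the previous buyer.
    unique.sort(key=lambda t: t["sale_date"])
    for prev, cur in zip(unique, unique[1:]):
        if prev["grantee"].strip().upper() != cur["grantor"].strip().upper():
            issues.append(("chain_gap", cur["document_number"]))

    return issues
```

Running a check like this on a sample from each target county during a trial gives a quick read on how much cleanup a provider has already done.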

The right data provider eliminates most of these issues before they reach your codebase. Standardized schemas, deduplicated records, and consistent field coverage mean less pre-processing work and fewer edge cases to handle in production.

Not every provider includes the same fields, and field depth varies significantly even within the same dataset. The table below categorizes common transaction record fields by how essential they are for typical use cases.

Unlocks investment signals, distressed asset detection, trend analysis

Providers that restrict access to core transaction fields while selling deeper layers as add-ons fragment the dataset at the integration level. You end up paying multiple times to reconstruct a record that should be unified from the start.

Evaluating a provider requires more than checking whether they have coverage in your target market. The questions below separate databases that work reliably in production from those that look good in a sales demo.

The database itself is only half the evaluation. How you access that data, on what terms, and with what constraints shapes the actual developer experience and the long-term cost of operating your product. Three dimensions stand out as the most consequential in practice.

Usage-based API pricing splits into two models: per-request, where you pay for every query regardless of results, and per-record, where you pay only for data actually delivered. For high-volume workflows like AVM pipelines or fraud screening systems, the difference is material. Failed queries, empty result sets, and retries after network errors are all billable under per-request billing, and they add up fast at production scale. Per-record pricing removes that overhead because a query that returns nothing costs nothing.
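The difference between the two models is easy to express as arithmetic. The functions below are a back-of-the-envelope sketch. The parameter names and every price used in the usage lines are made-up values for illustration, and which model comes out cheaper depends entirely on a provider's actual unit prices and on your hit rate.

```python
def per_request_cost(queries, price_per_request, retry_rate=0.0):
    """Every call is billed, including empty results and retried calls.

    retry_rate is the assumed fraction of queries that must be sent again.
    """
    billable_calls = queries * (1 + retry_rate)
    return billable_calls * price_per_request


def per_record_cost(queries, hit_rate, records_per_hit, price_per_record):
    """Only delivered records are billed; empty results and retries are free.

    hit_rate is the assumed fraction of queries that return data.
    """
    delivered_records = queries * hit_rate * records_per_hit
    return delivered_records * price_per_record


# Hypothetical monthly workload and prices, for comparison only.
monthly_queries = 1_000_000
request_bill = per_request_cost(monthly_queries, price_per_request=0.004, retry_rate=0.05)
record_bill = per_record_cost(monthly_queries, hit_rate=0.6, records_per_hit=1,
                              price_per_record=0.005)
print(f"per-request: ${request_bill:,.2f}  per-record: ${record_bill:,.2f}")
```

Plugging in your own expected hit rate is the useful part: the lower the share of queries that return data, the more a per-request model charges for nothing.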

Rate limiting is a constraint that rarely gets discussed upfront in a vendor evaluation, but it has a significant effect on how much engineering work is required before a production integration goes live. APIs with requests-per-second or requests-per-minute caps require developers to implement throttling logic that paces outgoing calls to stay under the limit. Any workload that needs to process records faster than the rate limit allows requires a queuing system and a retry handler on top of the core integration.
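For a sense of what that throttling logic involves, here is a minimal sketch of a client-side pacer plus a retry wrapper with exponential backoff. `RateLimited` is a hypothetical exception standing in for however a given API signals a rate-limit rejection (commonly an HTTP 429 response), and `fetch` is any zero-argument callable that performs the request. A production version would also need a queue, jitter, and monitoring.

```python
import time


class RateLimited(Exception):
    """Hypothetical error raised when the API rejects a call for rate reasons."""


class Pacer:
    """Spaces calls so that at most rate_per_second of them start each second."""

    def __init__(self, rate_per_second, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 1.0 / rate_per_second
        self.clock = clock
        self.sleep = sleep
        self.next_allowed = 0.0

    def wait(self):
        """Block until the next call is allowed, then reserve the next slot."""
        now = self.clock()
        if now < self.next_allowed:
            self.sleep(self.next_allowed - now)
            now = self.next_allowed
        self.next_allowed = now + self.min_interval


def call_with_retry(fetch, pacer, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch() under the pacer, backing off exponentially when rate limited."""
    for attempt in range(max_attempts):
        pacer.wait()
        try:
            return fetch()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
```

The `clock` and `sleep` parameters exist only so the pacing can be tested without real delays. Even this small amount of code has to be kept in step with the provider's published limits.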

For teams evaluating an ownership data API, throttling constraints carry both a development cost and an ongoing maintenance burden. Throttling logic interacts with infrastructure scaling, error handling, and monitoring in ways that create a surface area for bugs. When the API's rate limit policies change, which they do, that code needs to be revisited. None of this is necessary if the API does not impose rate limits in the first place.

The absence of rate limiting should be a specific question, not an assumption. Some providers advertise unrestricted access, then impose soft limits that surface only under production load. Get it confirmed in writing and test against it during your trial period.

Geographic coverage restrictions are the most common source of scaling friction for PropTech companies that grow beyond their initial market. A provider that packages data by region, state, or metro creates a situation where expanding coverage requires renegotiating contracts, purchasing add-on packages, and potentially dealing with inconsistent field schemas across geography bundles. A product that performs well in California does not automatically perform the same way in Georgia if the coverage packages are sourced and structured differently.

Full national access under a single integration eliminates that friction. One API key, one schema, one contract, full U.S. coverage. There are no metro packages to stack, no volume discounts that only apply to certain regions, and no need to audit your data pipeline for geographic gaps as your user base expands. For teams building products designed for national deployment, this is not a nice-to-have. It is a structural requirement that affects every part of your data architecture, from ownership and identity verification to property-level transaction analysis.

Property type coverage deserves equal attention. A database that covers residential transactions thoroughly but lacks commercial and industrial records is not a complete real estate transaction database. It is a residential transaction database. Teams building products for mixed-use markets, commercial investors, or industrial operators cannot rely on it without supplementing with additional providers, which reintroduces the integration complexity they were trying to avoid.

The table below illustrates how the two dominant API pricing models compare across the factors that matter most in a production environment.

Per-request models carry a higher cost at scale and require engineering investment upfront, before any real usage begins, to operate reliably. Per-record credit models eliminate that pre-build tax entirely.

Use this checklist to evaluate any real estate transaction database or data API before integrating it into your product or workflow. Print it, share it with your team, or run through it on a discovery call with a prospective vendor.

A vendor that cannot answer most of these questions clearly is signaling something about how their product operates in production. Take that seriously before you build on top of it.

These are the questions data and engineering teams most commonly ask when evaluating a transaction data provider for the first time.

A real estate transaction database is built from completed property sales, typically sourced from county recorder and assessor public records. MLS data, by contrast, covers active and recently sold listings managed by real estate brokerages and is generally restricted to licensed participants. Transaction databases are broader in scope, cover all property types, and include historical ownership chains that MLS feeds typically do not provide. For analytics, valuation, or fraud detection, transaction database access is more complete and more accessible than MLS-based sources.

How fresh the data needs to be depends heavily on the use case. Fraud screening and active investment monitoring require the most current data available, ideally updated daily or weekly as county records are filed. Automated valuation models can typically operate on monthly or quarterly refreshes without significant accuracy degradation, since recorded comparable sales rarely change after the fact. If your product involves real-time decisions, confirm the exact refresh cadence and the lag time between a county filing and when that record appears in the database.
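One way to check the lag during a trial is to compare each record's county recording date with the date it first appeared in the provider's data. The sketch below assumes each record exposes both dates under the hypothetical keys `recorded` and `first_seen`. Many providers will not expose the second one directly, in which case you would have to capture it yourself by polling.

```python
from datetime import date
from statistics import median


def filing_lag_days(records):
    """Days between county recording and first appearance, per record.

    Records missing either date are skipped. Key names are hypothetical.
    """
    return [
        (r["first_seen"] - r["recorded"]).days
        for r in records
        if r.get("first_seen") and r.get("recorded")
    ]


def lag_summary(records):
    """Median and worst-case lag, or None if no record has both dates."""
    lags = filing_lag_days(records)
    if not lags:
        return None
    return {"median_days": median(lags), "max_days": max(lags), "n": len(lags)}
```

The worst-case figure matters as much as the median for fraud screening, since a single slow county can hide a very recent transfer.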

Whether a database includes commercial and industrial property depends on the provider. Many databases are primarily residential and include commercial or industrial transactions as limited add-on tiers, often with less field depth and less frequent updates. A genuinely comprehensive dataset should cover all property types under a single schema with consistent field coverage. If you are building a product that will eventually serve commercial investors, industrial operators, or mixed-use markets, verify property type coverage before you integrate rather than discovering the gap when you need to expand.

A solid transaction API response should include sale price, sale date, deed type, buyer and seller names, parcel identifier, and basic property characteristics at minimum. A more complete record adds financing details, prior transaction history, tax assessment data, and ownership chain information. The parcel identifier is particularly important because it is the key that enables joins with other datasets. If a provider's transaction records do not include a consistent parcel ID, cross-referencing with tax records, zoning data, or listing history becomes substantially more difficult.
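The join role of the parcel identifier can be sketched as follows. Because parcel numbers are assigned by each county and are only unique within it, the example keys on a county code plus a normalized parcel number. The field names (`county_fips`, `parcel_id`, `assessed_value`) are assumptions for illustration. Unmatched transactions are returned explicitly rather than dropped.

```python
def parcel_key(record):
    """Build a (county, parcel) key, or None if the parcel ID is missing."""
    raw = record.get("parcel_id") or ""
    apn = "".join(ch for ch in raw if ch.isalnum()).upper()
    if not apn:
        return None
    return (record.get("county_fips"), apn)


def join_on_parcel(transactions, assessments):
    """Attach assessed_value to each transaction by parcel key.

    Returns (joined, unmatched) so that missing or inconsistent parcel IDs
    show up as a count instead of silently shrinking the result.
    """
    by_parcel = {}
    for a in assessments:
        key = parcel_key(a)
        if key is not None:
            by_parcel[key] = a

    joined, unmatched = [], []
    for tx in transactions:
        match = by_parcel.get(parcel_key(tx))
        if match is None:
            unmatched.append(tx)
        else:
            joined.append({**tx, "assessed_value": match["assessed_value"]})
    return joined, unmatched
```

Tracking the size of `unmatched` per county is a simple way to quantify how consistent a provider's parcel IDs really are.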

Your data foundation shapes every product or workflow built on top of it. Incomplete transaction records produce unreliable valuation models. Patchy ownership data creates gaps in fraud screening. Regional coverage restrictions generate friction as products scale to new markets. And pricing models that charge per request rather than per record turn high-volume data workflows into unpredictable cost centers.

The questions in the checklist above are not edge cases. They are the variables that determine whether a data integration runs smoothly in production or becomes a persistent source of engineering issues. The best time to ask them is before you commit, during a trial period where you can validate coverage and field depth against your actual use cases.

Datafiniti's property data platform gives developers and data teams access to a comprehensive real estate transaction database covering residential, commercial, and industrial properties nationwide, with per-record pricing, no rate limiting, and a consistent schema across all geographies. There are no regional packages to stack, no charges for empty queries, and no throttling logic required before you can go to production. Get in touch to get started and see what your product can do with clean, accessible transaction data.
